Technical SEO for UAE Businesses in 2026 — AI Crawlers, Schema & Core Web Vitals

Google’s bot isn’t the only crawler that matters anymore.

By 2026, your website is being read — and judged — by a new generation of AI crawlers: OAI-SearchBot from OpenAI, PerplexityBot, Google’s AI Overview indexing system, and the retrieval layers behind every generative AI platform your potential customers are using.

These bots behave differently from traditional search crawlers. They make more request errors. They struggle with poorly structured HTML. They can’t extract meaning from content that isn’t semantically clear. And if your robots.txt file inadvertently blocks them — which is more common than you’d think — you’re invisible to the AI-generated answers your competitors are already appearing in.

This guide covers the full technical SEO stack for UAE businesses in 2026 — from the fundamentals that haven’t changed to the AI-specific configurations most agencies haven’t caught up with yet.

Make Sure AI Crawlers Can Access Your Site

The most common and immediately fixable technical error UAE businesses make in 2026 is accidentally blocking AI crawlers in their robots.txt file.

Your robots.txt file controls which bots can crawl which parts of your website. If it contains blanket Disallow rules, or if the default configuration of your CMS restricts non-Google user agents, you may be completely invisible to every AI platform that matters — even if your Google rankings are strong.

Check your robots.txt file at yoursite.ae/robots.txt. The minimum configuration for AI visibility:

User-agent: *

Allow: /

Disallow: /wp-admin/

Disallow: /wp-login.php

Disallow: /wp-json/

User-agent: OAI-SearchBot

Allow: /

User-agent: PerplexityBot

Allow: /

User-agent: ChatGPT-User

Allow: /

User-agent: Googlebot

Allow: /

Sitemap: https://fictoralabs.ae/sitemap_index.xml

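Blocking these agents guarantees you are absent from answers such as ChatGPT’s recommendations for “best marketing agency in Dubai”; allowing them is the precondition for appearing, not a guarantee. Note that a crawler with its own User-agent group follows only that group and ignores the rules under User-agent: *, so repeat any Disallow lines you want the named bots to respect. Finally, cross-reference your robots.txt against any page-level noindex tags that might be silently keeping key service pages out of AI indexes.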
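A quick way to sanity-check a robots.txt file before deploying it is to run it through Python's standard-library parser. The sketch below is illustrative only: the helper name blocked_agents and the restrictive sample file are assumptions, and the agent list simply mirrors the example configuration above. Python's parser applies the first matching rule in a group, whereas Google uses the most specific match, so treat the result as a rough check rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser

# Crawler names taken from the example configuration in this article.
AI_AGENTS = ["OAI-SearchBot", "PerplexityBot", "ChatGPT-User", "Googlebot"]


def blocked_agents(robots_txt, url, agents=AI_AGENTS):
    """Return the agents that this robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical restrictive default that only lets Googlebot in.
restrictive = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

print(blocked_agents(restrictive, "https://yoursite.ae/services/"))
```

With this sample file, every AI crawler except Googlebot is reported as blocked, which is exactly the situation where Google rankings look healthy while AI platforms see nothing.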

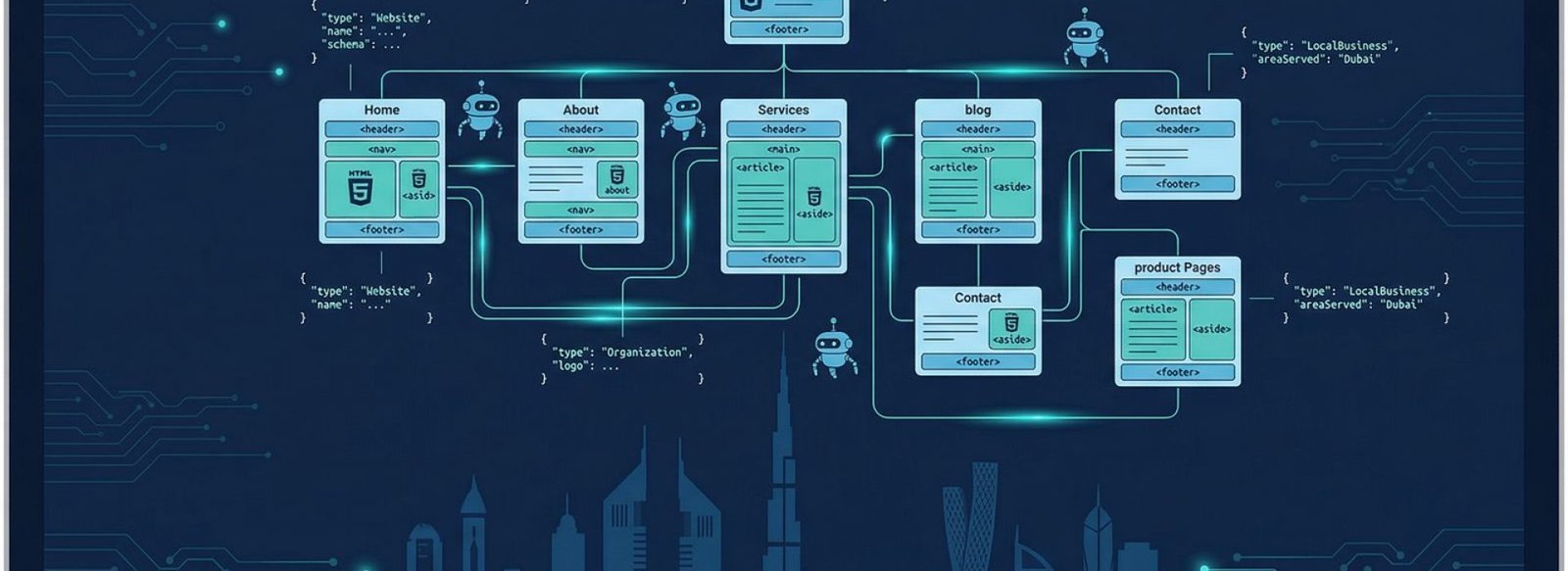

HTML5 Semantic Structure — How AI Systems Read Your Pages

AI systems don’t just read your text. They parse your page structure to understand the hierarchy and relationships between ideas on each page. A flat wall of content inside generic <div> elements is far less extractable than content using proper HTML5 semantic markup.

The minimum structure every page needs:

<main>

<article>

<h1>Primary topic of the page</h1>

<section>

<h2>Subtopic</h2>

<p>Content that answers a specific question...</p>

</section>

<section>

<h2>Another subtopic</h2>

<p>More content...</p>

</section>

</article>

</main>

Three rules for UAE business websites:

Strict H1–H3 hierarchy — one H1 per page, H2s for major sections, H3s for subsections within those sections. Never skip a heading level. AI systems use heading structure to build a semantic map of your page’s content and identify what each section is answering.

<nav> and <main> elements explicitly defined — these tell crawlers and AI systems where your navigation ends and your actual content begins. Many WordPress/Elementor sites don’t output these correctly without checking theme settings.

speakable schema property for key definitions: for paragraphs or summaries you want voice assistants to read aloud, the speakable property in your JSON-LD marks that content as suitable for audio output. Google’s documented support for speakable is still in beta and limited in scope, so treat it as a low-cost AEO addition rather than a guaranteed advantage. It remains underused in the UAE market.
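The heading rule above can be checked mechanically. The following is a minimal sketch using only Python's standard library; the names HeadingCollector and heading_issues are hypothetical, and it only inspects heading tags in source order, so it will not notice headings injected later by JavaScript.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Records the level (1-6) of every heading tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html):
    """Return human-readable problems with the page's heading hierarchy."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    # A heading may go deeper by at most one level at a time.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current} (skipped level)")
    return issues


print(heading_issues("<h1>Fit-out services</h1><h3>Pricing</h3>"))
```

An empty list means the page has exactly one H1 and never jumps a level on the way down; moving back up (an H3 followed by an H2) is allowed.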

Core Web Vitals — Still Critical, Still Misunderstood

Google’s Core Web Vitals remain part of Google’s page experience ranking signals, and they may also act as a quality signal for AI platforms that filter citations by web quality. A slow, unstable website is less likely to be cited by AI systems, regardless of how good the content is.

- LCP — Largest Contentful Paint — target under 2.5 seconds

- CLS — Cumulative Layout Shift — target under 0.1

- INP — Interaction to Next Paint — target under 200ms
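If you export field or lab measurements, the three targets can be encoded once and reused in a report. This is a small sketch under stated assumptions: the function name failing_vitals and the sample measurements are invented for illustration, and a value exactly at the target is treated as passing.

```python
# Targets from the list above: LCP in seconds, CLS unitless, INP in milliseconds.
THRESHOLDS = {"LCP": 2.5, "CLS": 0.1, "INP": 200}


def failing_vitals(measured):
    """Return the names of the metrics whose measured value exceeds its target."""
    return sorted(
        name for name, limit in THRESHOLDS.items() if measured[name] > limit
    )


# Hypothetical mobile measurements for a single page.
sample = {"LCP": 3.1, "CLS": 0.05, "INP": 240}
print(failing_vitals(sample))  # ['INP', 'LCP']
```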

LCP — Largest Contentful Paint — target under 2.5 seconds

The most common LCP failure on UAE business websites is uncompressed hero images. A 2MB JPEG loading as the main hero element will consistently fail LCP on mobile. The fix:

- Convert all images to WebP format, which is typically 25–35% smaller than JPEG at comparable quality

- Set explicit width and height attributes on all images so the browser doesn’t need to wait for the image to calculate page layout

- Use loading="lazy" on all below-the-fold images, but never on the hero image, which needs to load immediately
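The image rules above lend themselves to an automated check of a page's HTML. The sketch below is a rough heuristic, not a full audit: ImageAudit and audit_images are hypothetical names, and it assumes the first img tag in source order is the hero image, which will not hold for every template.

```python
from html.parser import HTMLParser


class ImageAudit(HTMLParser):
    """Flags img tags that break the LCP rules listed in this section."""

    def __init__(self):
        super().__init__()
        self.issues = []
        self.seen_first_image = False

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src", "(no src)")
        if "width" not in attributes or "height" not in attributes:
            self.issues.append(f"{src}: missing width/height")
        # Assumption: the first image in the markup is the hero image.
        if not self.seen_first_image and attributes.get("loading") == "lazy":
            self.issues.append(f"{src}: first image is lazy-loaded")
        self.seen_first_image = True


def audit_images(html):
    """Return a list of image problems found in the given HTML string."""
    audit = ImageAudit()
    audit.feed(html)
    return audit.issues


page = (
    '<img src="hero.webp" loading="lazy">'
    '<img src="team.webp" width="600" height="400" loading="lazy">'
)
print(audit_images(page))
```

Here the hero image is reported twice (no dimensions, and lazy-loaded), while the below-the-fold team photo passes.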

CLS — Cumulative Layout Shift — target under 0.1

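Layout shifts are most commonly caused by images without specified dimensions, web fonts loading late and causing text to reflow, or third-party widgets injecting content after the initial page load. On Elementor-built sites specifically, check that all image widgets have explicit dimensions set. For fonts, font-display: swap keeps text visible while the web font loads, but the swap itself can shift the layout, so pair it with a fallback font of similar metrics, or use font-display: optional where a late swap is unacceptable.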

INP — Interaction to Next Paint — target under 200ms

Long-running JavaScript on the main thread is the primary cause of INP failures, because the browser cannot respond to a tap or click while a script is executing. Audit your WordPress plugin load order: every plugin that loads JavaScript on the front end competes for the main thread and can add to your INP. Defer any scripts that don’t need to execute on initial page render. LiteSpeed Cache and similar caching plugins handle some of this automatically but rarely catch everything.

A UAE construction firm that prioritised Core Web Vitals fixes alongside schema markup implementation saw a 310% increase in organic traffic and began appearing in AI Overviews for local fit-out service queries within 75 days.

Schema Markup - The Language AI Systems Speak

Schema markup is structured data that tells AI systems exactly what your content is about in machine-readable language. Without it, AI platforms have to infer meaning from your text alone — which introduces ambiguity. With it, you provide explicit context that directly influences how your content is processed, cited, and surfaced.

The priority schema types for UAE businesses in 2026:

Organization — establishes your entity with AI systems. Includes your name, URL, logo, founding date, address, phone, social profiles, and awards. This is how ChatGPT, Gemini, and Perplexity build their knowledge of who your business is. Without it, they’re guessing.

LocalBusiness — critical for “near me” searches and Google Maps visibility. Includes your NAP (Name, Address, Phone), opening hours, price range, and service area. For UAE businesses, ensure addressCountry is set to AE and prices reference AED.

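FAQPage: each Q&A pair you mark up becomes a machine-readable candidate for AI Overviews and featured snippets. FAQPage schema is reported to improve AI visibility by up to 40% for the questions it covers, though that figure is hard to verify independently. Since 2023 Google has shown FAQ rich results only for a limited set of authoritative government and health sites, so for most businesses the benefit is clearer machine-readable Q&A rather than a visual rich result. Every service page and blog post with a FAQ section should still have this implemented.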

Service — describes each service you offer, who it’s for, what it covers, what it costs, and what geographic area it serves. This connects your specific services to your Organization entity and makes it explicit to AI systems what your business actually does.

BreadcrumbList — tells crawlers and AI systems where each page sits in your site hierarchy. Essential for service pages that sit at /services/service-name/ — without it, AI systems have less context about how the page relates to the overall site.

All schema should be implemented as <script type="application/ld+json"> blocks. Google reads JSON-LD from either the <head> or the <body>, but keeping it in the <head> of each page makes it easier to find and audit. For WordPress sites, the Code Snippets plugin with page-specific conditions is the cleanest implementation method without requiring theme file edits.
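To make the LocalBusiness description concrete, here is a minimal sketch that builds the JSON-LD block in Python. Every value in local_business is a placeholder (business name, phone number, price range and hours are invented), and json_ld_script is a hypothetical helper; the property names themselves come from schema.org.

```python
import json


def json_ld_script(data):
    """Serialise a schema.org dictionary into a JSON-LD script tag."""
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values only; replace with your real business details.
local_business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Trading LLC",
    "url": "https://yoursite.ae/",
    "telephone": "+971-4-000-0000",
    "priceRange": "AED 500-5000",
    "currenciesAccepted": "AED",
    "openingHours": "Mo-Fr 09:00-18:00",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Dubai",
        "addressCountry": "AE",
    },
}

print(json_ld_script(local_business))
```

Generating the block from a dictionary rather than hand-editing JSON avoids the trailing-comma and quoting mistakes that make validators reject a snippet. Run the output through a structured data validator before publishing.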

XML Sitemap - What to Include and What to Block

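Your XML sitemap is the map you hand to crawlers. It is a strong hint rather than a command: what you include tells Google and AI platforms which URLs you consider worth crawling, and what you leave out keeps you from advertising pages you never want surfaced.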

What to Include:

- All public service pages

- All published blog posts

- About, Contact, Portfolio pages

- Any location-specific landing pages

What to Exclude:

- /wp-admin/

- /wp-login.php

- /wp-json/

- Any thank-you or confirmation pages

- Paginated archive pages beyond page 2

- Tag and author archive pages that add no unique value

For Rank Math users, verify your sitemap is live at yoursite.ae/sitemap_index.xml and submit it to Google Search Console. Also verify that the sitemap URL is correctly referenced in your robots.txt file. Crawlers you cannot submit a sitemap to directly have only that Sitemap: line to discover it by, so a sitemap missing from robots.txt is easily missed by AI crawlers.
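Rank Math generates the sitemap for you, but the include and exclude logic is simple enough to sketch, which helps when auditing a custom-built site. In the example below, build_sitemap and the /thank-you/ path are assumptions made for illustration; the other excluded prefixes are the ones listed above.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Paths that should never appear in the sitemap. "/thank-you/" is a hypothetical
# confirmation page path; adjust it to match your own site.
EXCLUDED_PREFIXES = ("/wp-admin/", "/wp-login.php", "/wp-json/", "/thank-you/")


def build_sitemap(urls):
    """Return sitemap XML containing only the URLs that should be crawled."""
    urlset = ET.Element(
        "urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9"
    )
    for url in urls:
        if urlparse(url).path.startswith(EXCLUDED_PREFIXES):
            continue
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


pages = [
    "https://yoursite.ae/services/seo/",
    "https://yoursite.ae/wp-admin/options.php",
    "https://yoursite.ae/blog/technical-seo/",
    "https://yoursite.ae/thank-you/",
]
print(build_sitemap(pages))
```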

Hreflang — Essential for UAE Bilingual Sites

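If your website serves both Arabic and English audiences, hreflang tags are non-negotiable. They tell search engines and AI crawlers which language version of a page to serve to which audience. This stops the two versions from competing with each other in results (there is no duplicate content penalty as such, but the wrong version can be shown) and helps Arabic-speaking users see the Arabic version in both Google Search and AI-generated responses. Each language version must carry the full set of tags, including one pointing to itself, or the annotations may be ignored.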

Basic hreflang implementation in <head>:

<link rel="alternate" hreflang="en-ae" href="https://yoursite.ae/page/" />

<link rel="alternate" hreflang="ar-ae" href="https://yoursite.ae/ar/page/" />

<link rel="alternate" hreflang="x-default" href="https://yoursite.ae/page/" />

Combine hreflang with your Organization schema to verify your business as a single entity across both language versions. This reduces semantic confusion for AI systems parsing your bilingual content — ensuring both versions are attributed to the same brand rather than treated as separate, potentially conflicting sources.
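Because every language version must output the same set of hreflang tags, it is safer to generate them from one mapping than to type them by hand on each page. A minimal sketch, assuming a hypothetical helper named hreflang_tags and the same example URLs used above:

```python
from html import escape


def hreflang_tags(alternates, default_lang):
    """Build the hreflang link tags for one page.

    alternates maps a language-region code to that version's absolute URL;
    default_lang names the version to use for x-default.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(lang)}" href="{escape(url)}" />'
        for lang, url in alternates.items()
    ]
    default_url = escape(alternates[default_lang])
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{default_url}" />'
    )
    return tags


page_versions = {
    "en-ae": "https://yoursite.ae/page/",
    "ar-ae": "https://yoursite.ae/ar/page/",
}
for tag in hreflang_tags(page_versions, default_lang="en-ae"):
    print(tag)
```

The same list is emitted in the head of both the English and the Arabic page, which is what keeps the annotations reciprocal.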

Key internal linking rules for UAE business websites:

- Every blog post should link to at least one service page within the first three paragraphs, not buried at the bottom as a CTA.

- Anchor text should be descriptive and keyword-relevant, not “click here” or “read more.”

- No published page should be an orphan. Every page needs at least one internal link pointing to it, or it’s effectively invisible to crawlers and AI systems that follow link graphs.
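The orphan-page rule is easy to test once you have a crawl export. The sketch below assumes the crawl has already been reduced to a dictionary mapping each page path to the internal paths it links to; find_orphans and the sample site are hypothetical.

```python
def find_orphans(link_graph, entry_points=("/",)):
    """Return pages that no other page links to.

    link_graph maps each page path to the internal paths it links out to.
    entry_points (the homepage by default) are never reported as orphans.
    """
    linked_to = set()
    for source, targets in link_graph.items():
        # A page linking to itself does not count as an inbound link.
        linked_to.update(target for target in targets if target != source)
    return sorted(
        page
        for page in link_graph
        if page not in linked_to and page not in entry_points
    )


# Hypothetical five-page site.
site = {
    "/": ["/services/", "/blog/"],
    "/services/": ["/"],
    "/blog/": ["/blog/post-a/"],
    "/blog/post-a/": ["/services/"],
    "/blog/post-b/": ["/services/"],
}
print(find_orphans(site))  # ['/blog/post-b/']
```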

Technical Audit Checklist — Run This Monthly

- Check: AI crawlers allowed in robots.txt. How to verify: view yoursite.ae/robots.txt directly

- Check: XML sitemap live and submitted. How to verify: Google Search Console → Sitemaps

- Check: No accidental noindex on key pages. How to verify: Rank Math → Site-wide analysis

- Check: Core Web Vitals passing. How to verify: Google PageSpeed Insights, mobile view

- Check: Schema present on all service pages. How to verify: Google Rich Results Test

- Check: Hreflang correct on bilingual pages. How to verify: Chrome DevTools → head inspection

- Check: No broken internal links. How to verify: Screaming Frog or Rank Math site audit

- Check: Featured images have alt text. How to verify: WordPress Media Library

Running this audit monthly takes under an hour and catches the issues that silently erode your rankings and AI citation potential between campaigns.
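The noindex item in the checklist can also be spot-checked on saved page HTML. This is a minimal sketch with hypothetical names (RobotsMetaFinder, has_noindex); it only looks at the robots meta tag, so a noindex sent through an X-Robots-Tag HTTP header or a bot-specific meta tag would not be caught.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Detects a robots meta tag that keeps the page out of the index."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag != "meta" or (attributes.get("name") or "").lower() != "robots":
            return
        content = (attributes.get("content") or "").lower()
        directives = {part.strip() for part in content.split(",")}
        # "none" is shorthand for "noindex, nofollow".
        if directives & {"noindex", "none"}:
            self.noindex = True


def has_noindex(html):
    """Return True if the HTML contains a robots meta tag with noindex."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return finder.noindex


print(has_noindex('<meta name="robots" content="noindex, follow">'))  # True
```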

Ready for a Technical SEO Audit?

Fictora Labs conducts full technical SEO audits for UAE businesses — covering AI crawler access, Core Web Vitals, schema implementation, hreflang configuration, and everything in between. Every issue found is prioritised by impact and fixed systematically.

Fictora Labs is a DFHQ-recognised SEO, AEO and GEO agency based in Dubai, UAE. Our AI workflow automation service also handles schema updates, sitemap management, and reporting automatically for businesses that want their technical stack to run without manual intervention.
